Iterating on the prompt criteria

After running an aiR for Review job for the first time, the initial results can be used as feedback for improving the prompt criteria. The cycle of examining the results, revising the prompt criteria, then running a new job on the sample documents is known as iterating on the prompt criteria. Refer to Best practices for more information. Also see Job capacity and size limitations for details on document and prompt limits.

See these additional resources on the Community site:

- Workflows for Applying aiR for Review

- aiR for Review example project

- Selecting a Prompt Criteria Iteration Sample for aiR for Review

- Evaluating aiR for Review Prompt Criteria Performance

Navigating the aiR for Review dashboard

When you select a project from the aiR for Review Projects tab, a dashboard appears showing the project's Prompt Criteria, the list of documents, and controls for editing the project. If the project has been run, it also displays the results.

Project details strip

At the top of the dashboard, the project details strip displays:

- Project name—the name chosen for the project. The Analysis Type appears underneath the name.

- Version selector—if this is the first version of the Prompt Criteria, this will say Version 1 as a text-only field. For later versions, the version number becomes clickable, and you can choose older versions to view their statistics. For more information, see How Prompt Criteria versioning works.

- Data source—name of the saved search chosen at project creation and the document count.

- If you add or remove documents from the saved search, those changes are not reflected in the aiR for Review project until you refresh the data source.

- To refresh the data source, click the refresh symbol beside the name.

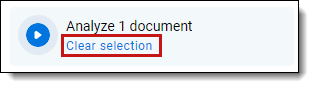

- Run button—press this to analyze the selected documents using the current version of the Prompt Criteria. If no documents are selected or filtered, it will analyze all documents in the data source.

- If you are viewing the newest version of the Prompt Criteria and no job is currently running, this says Analyze [X] documents.

- If an analysis job is currently running or queued, this button is unavailable and a Cancel option appears.

- If you are viewing older versions of the Prompt Criteria, this button is unavailable.

- Feedback icon—send optional feedback to the aiR for Review development team.

Prompt Criteria panel

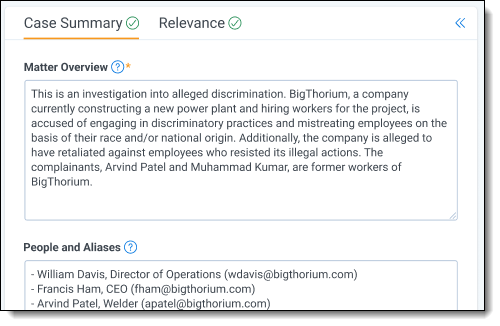

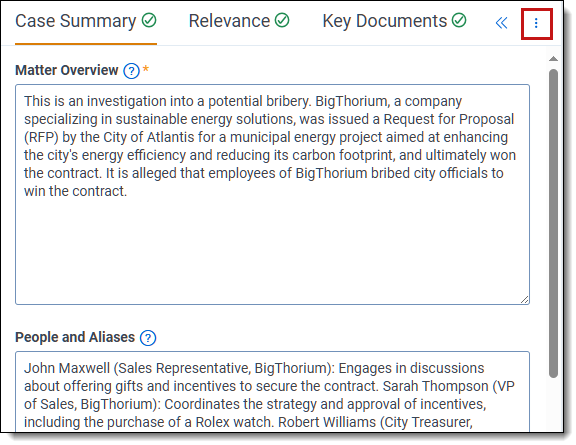

On the left side of the dashboard, the Prompt Criteria panel displays tabs that match the project type you chose when creating the project. These tabs contain fields for writing the criteria you want aiR for Review to use when analyzing the documents.

Possible tabs include:

- Case Summary—appears for all analysis types.

- Relevance—appears for Relevance and Relevance and Key Documents analysis types.

- Key Documents—appears for the Relevance and Key Documents analysis type.

- Issues—appears for the Issues analysis type.

For information on filling out the Prompt Criteria tabs, see Step 2: Writing the Prompt Criteria.

For information on building prompt criteria from existing case documents, like requests for production or review protocols, see Using prompt kickstarter.

To export the prompt criteria displayed, see Exporting Prompt Criteria.

If you want to temporarily clear space on the dashboard, click the Collapse symbol (<<) in the upper right of the Prompt Criteria panel. To expand the panel, click the symbol again.

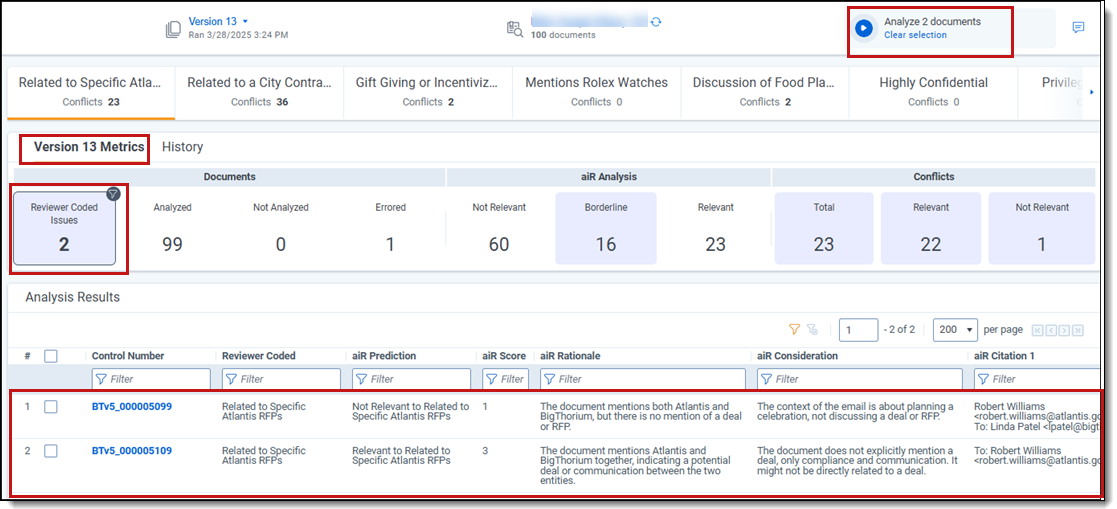

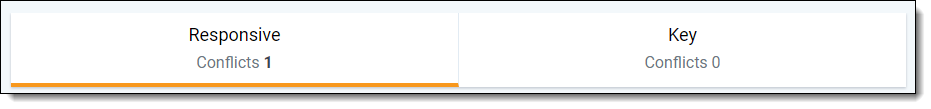

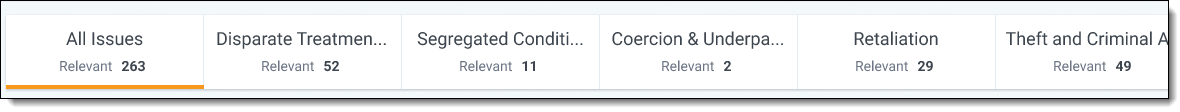

Aspect selector bar

The aspect selector bar appears in the upper middle section of the dashboard for projects that use Issues analysis or Relevance and Key Documents analysis. This lets you choose which metrics, citations, and other results to view in the Analysis Results grid.

- For a Relevance and Key Documents analysis:

Two aspect tabs appear: one for the field you selected as the Relevant Choice, and one for the field you selected as the Key Document Choice.

- For Issues analysis:

An aspect tab appears for every Issues field choice that has choice criteria. The tabs appear in order according to each choice's Order value. For information on changing the choice order, see Choice detail fields. Additionally, the All Issues tab offers a comprehensive overview of predictions and scores for all issues and documents within the project. The total number of issue predictions displayed on the All Issues tab is calculated by multiplying the number of issues by the number of documents. For more information, see the Issue Predictions section.

When you select one of the aspect tabs in the bar, both the project metrics section and analysis results grid update to show results related to that aspect. For example:

- If you choose the Key Document tab:

The project metrics section shows how many documents have been coded as key. The Analysis Results grid updates to show predictions, rationales, citations, and all other fields related to whether the document is key.

- If you choose an issue from the aspect selector:

The project metrics section and analysis results grid both update to show results related to that specific issue. The total number of issue predictions in this section is calculated by multiplying the number of issues by the number of documents. For example, if there are five issues and 100 documents, there will be 500 issue predictions.
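The arithmetic behind the issue prediction count can be sketched in a few lines of Python. This is only an illustration: the function name `total_issue_predictions` is hypothetical and is not part of aiR for Review.

```python
def total_issue_predictions(issue_count: int, document_count: int) -> int:
    """Return the number of issue predictions for a project.

    Assumes every document receives exactly one prediction per issue,
    as described for the All Issues tab.
    """
    if issue_count < 0 or document_count < 0:
        raise ValueError("counts must be non-negative")
    return issue_count * document_count


# Five issues across 100 documents.
print(total_issue_predictions(5, 100))  # 500
```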

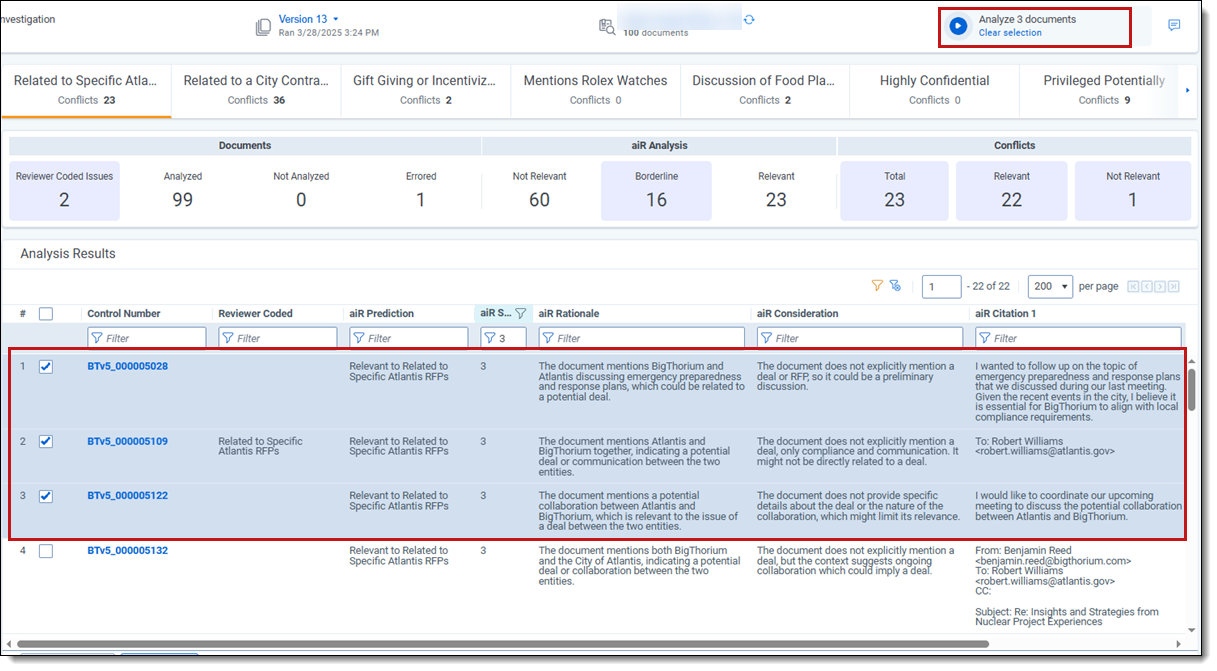

Project metrics section

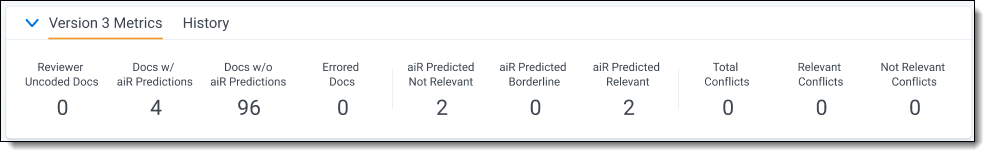

In the middle section of the dashboard, the project metrics section shows the results of previous analysis jobs. There are two tabs: one for the current version's most recent results (Version Metrics tab), and one for a list of historical results for all previous versions (History tab).

Version Metrics tab

The Version [X] Metrics tab shows metrics divided into sections:

Documents section

- Reviewer Uncoded (for Relevance or Relevance and Key Documents analysis only)—documents that do not have a value assigned in the Relevance Field. When viewing the Key aspect, this shows documents that do not have a value assigned in the Key Field.

- Reviewer Coded Issues (for Issues analysis only)—total number of documents that reviewers coded as having the selected issue. When the All Issues tab is selected, the counts for each issue are combined, which may result in a number that is more than the total document count.

- Analyzed—documents in the data source that have a prediction attached from this Prompt Criteria version.

- Not Analyzed—documents in the data source that do not have a prediction attached from this Prompt Criteria version.

- Errored—documents that received an error code during analysis. For more information, see How document errors are handled.

Issue Predictions section

This section displays for Issues analysis when the All Issues tab is selected.

- Not Relevant—issues predicted as junk or not relevant to the current aspect.

- Borderline—issues predicted as bordering between relevant and not relevant to the current aspect.

- Relevant—issues predicted relevant or very relevant to the current aspect.

- Errored—issues that received an error code during analysis.

aiR Analysis section

- Not Relevant—documents predicted as junk or not relevant to the current aspect.

- Borderline—documents predicted as bordering between relevant and not relevant to the current aspect.

- Relevant—documents predicted relevant or very relevant to the current aspect.
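As a rough illustration of how individual predictions roll up into the three counts above, the following Python sketch groups prediction labels into buckets. The label strings and the names `BUCKETS` and `bucket_counts` are assumptions made for this example; they do not reflect how aiR for Review stores its results.

```python
from collections import Counter

# Hypothetical prediction labels mapped to the three dashboard buckets.
BUCKETS = {
    "junk": "Not Relevant",
    "not relevant": "Not Relevant",
    "borderline": "Borderline",
    "relevant": "Relevant",
    "very relevant": "Relevant",
}


def bucket_counts(predictions):
    """Count predictions per dashboard bucket (label matching is case-insensitive)."""
    counts = Counter({"Not Relevant": 0, "Borderline": 0, "Relevant": 0})
    for label in predictions:
        counts[BUCKETS[label.lower()]] += 1
    return dict(counts)


sample = ["junk", "relevant", "Very Relevant", "borderline"]
print(bucket_counts(sample))
```

An unrecognized label raises a `KeyError` in this sketch, which keeps unexpected values from being silently miscounted.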

Conflicts section

- Total—total number of documents that have a different coding decision from the predicted result. This is the sum of the Relevant Conflicts and Not Relevant Conflicts fields.

- Relevant—documents predicted as relevant or very relevant to the current aspect, but the coding decision in the related field says something else.

- Not Relevant—documents predicted as not relevant to the current aspect, but the coding decision in the related field says relevant.
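The conflict counts can be modeled with a short Python sketch. The `Doc` type and `conflict_counts` function are hypothetical names for this example. The sketch also assumes that uncoded documents and Borderline predictions are not counted as conflicts, which the definitions above do not state explicitly.

```python
from typing import NamedTuple, Optional


class Doc(NamedTuple):
    control_number: str
    coded_relevant: Optional[bool]  # None means the reviewer has not coded it
    predicted: str                  # "Not Relevant", "Borderline", or "Relevant"


def conflict_counts(docs):
    """Count documents whose human coding disagrees with the prediction."""
    # Predicted relevant, but the reviewer coded the document as not relevant.
    relevant = sum(
        1 for d in docs if d.predicted == "Relevant" and d.coded_relevant is False
    )
    # Predicted not relevant, but the reviewer coded the document as relevant.
    not_relevant = sum(
        1 for d in docs if d.predicted == "Not Relevant" and d.coded_relevant is True
    )
    return {
        "Relevant": relevant,
        "Not Relevant": not_relevant,
        "Total": relevant + not_relevant,
    }


docs = [
    Doc("A", True, "Relevant"),        # agreement
    Doc("B", False, "Relevant"),       # Relevant conflict
    Doc("C", True, "Not Relevant"),    # Not Relevant conflict
    Doc("D", None, "Relevant"),        # uncoded, skipped (assumption)
    Doc("E", False, "Borderline"),     # borderline, skipped (assumption)
]
print(conflict_counts(docs))  # {'Relevant': 1, 'Not Relevant': 1, 'Total': 2}
```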

Depending on which type of results you view, the metrics base their counts on different fields:

- When viewing Relevance results, the relevance-related metrics base their counts on the Relevance Field.

- When viewing Key Document results, the relevance-related metrics base their counts on the Key Document Field.

- When viewing results for Issues analysis, the relevance-related metrics base their counts on whether documents were marked for the selected issue.

For example, if you view results for an issue called Fraud, the aiR Predicted Relevant field will show documents that aiR predicted as relating to Fraud. If you view Key Document results, the aiR Predicted Relevant field will show documents that aiR predicted as being key.

Filtering the Analysis Results using version metrics

To filter the Analysis Results table based on any of the version metrics, click the desired metric in the banner. This narrows the results shown in the table to only documents that are part of the metric. It also auto-selects those documents for the next analysis job. The number of selected documents is reflected in the Run button's text. This makes it easier to analyze a subset of the document set instead of selecting all documents every time. To remove filtering, click Clear selection underneath the Run button.

You can also filter documents in the Analysis Results grid by selecting them in the table. See Filtering and selecting documents for analysis.

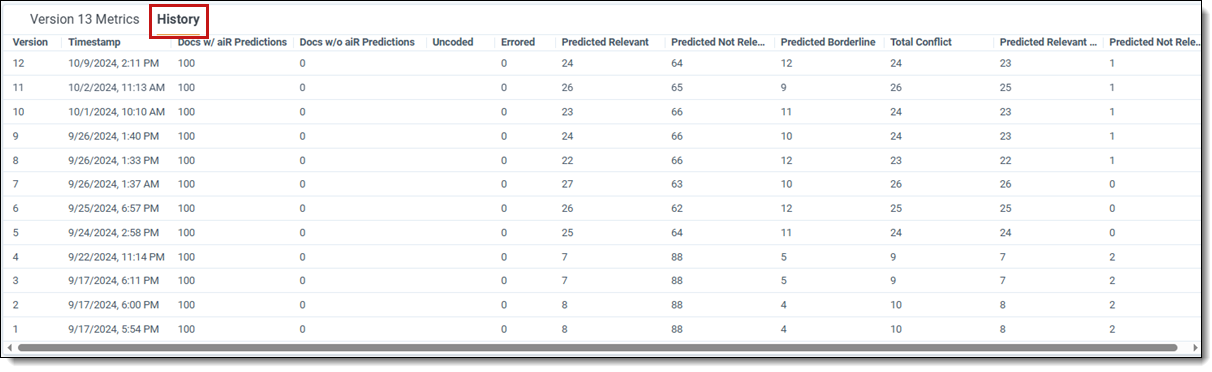

History tab

The History tab shows results for all previous versions of the Prompt Criteria. This table includes all fields from the Version Metrics tab, sorted into rows by version. For a list of all Version Metrics fields and their definitions, see Version Metrics tab.

It also displays two additional columns:

- Version—the Prompt Criteria version that was used for this row's results.

- Timestamp—the time the analysis job ran.

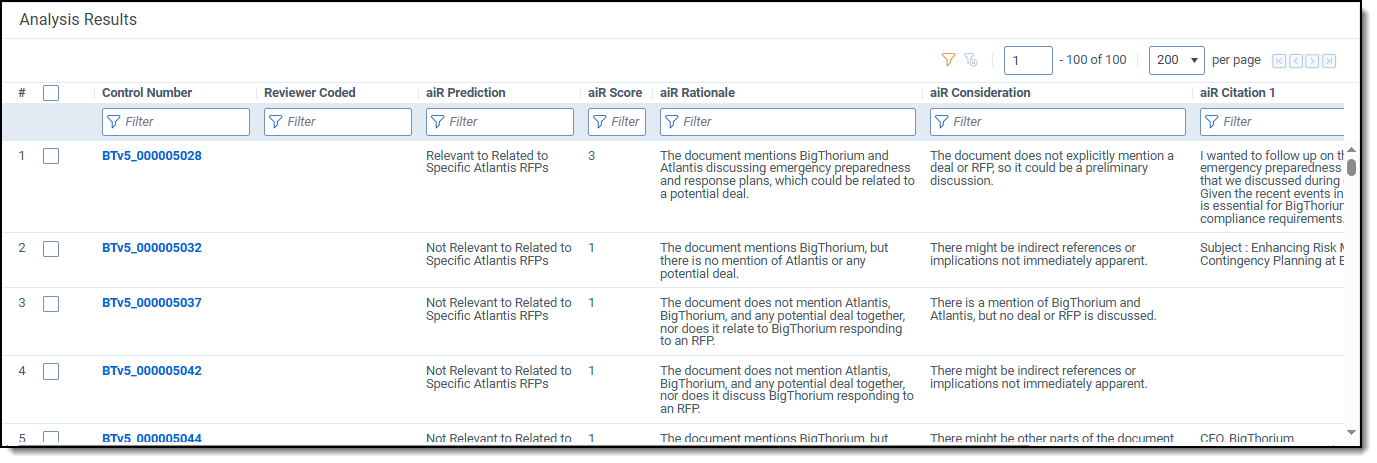

Analysis Results section

In the middle section of the dashboard, the Analysis Results section shows a list of all documents in the project. If the documents have aiR for Review analysis results, those results appear beside them in the grid.

The fields that appear in the grid vary depending on what type of analysis was chosen. For a list of all results fields and their definitions, see Analyzing aiR for Review results.

aiR for Review's predictions do not overwrite the Relevance, Key, or Issues fields chosen during Prompt Criteria setup. Instead, the predictions are held in other fields. This makes it easier to distinguish between human coding choices and aiR's predictions.

To view inline highlighting and citations for an individual document, click on the Control Number link. The Viewer opens and displays results for the selected Prompt Criteria version. For more information on using aiR for Review in the Viewer, see aiR for Review Analysis.

Filtering and selecting documents for analysis

To manually select documents to include in the next analysis run, check the box beside each individual document in the Analysis Results grid. The number of selected documents is reflected in the Run button's text. To remove filtering, click Clear selection underneath the Run button.

You can also filter the Analysis Results grid by clicking the metrics in the Version Metrics section. See Filtering the Analysis Results using version metrics.

Clearing selected documents

Click Clear selection below the Run button to deselect all documents in the Analysis Results grid. This resets your selections so the next analysis includes all documents in the data source.

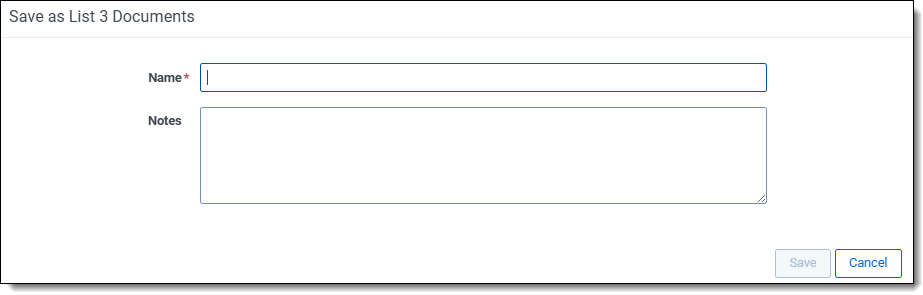
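The selection behavior described above (an empty selection means the whole data source is analyzed) can be pictured with this minimal Python sketch. The function names are hypothetical and only model the documented behavior; they are not a Relativity API.

```python
def documents_for_next_run(data_source_ids, selected_ids):
    """Return the documents the next job would analyze.

    If nothing is selected, every document in the data source is analyzed.
    """
    selected = set(selected_ids)
    chosen = [doc_id for doc_id in data_source_ids if doc_id in selected]
    return chosen if chosen else list(data_source_ids)


def run_button_text(data_source_ids, selected_ids):
    """Mirror the 'Analyze [X] documents' label on the Run button."""
    count = len(documents_for_next_run(data_source_ids, selected_ids))
    return f"Analyze {count} documents"


data_source = ["DOC-001", "DOC-002", "DOC-003", "DOC-004"]
print(run_button_text(data_source, ["DOC-002", "DOC-004"]))  # Analyze 2 documents
print(run_button_text(data_source, []))                      # Analyze 4 documents
```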

Saving selected documents as list

To save a group of documents as a list, follow the steps below.

- Select the box beside each individual document in the Analysis Results grid that you want to add to the list.

- Click the Save as List button at the bottom of the grid.

- Enter a unique Name for the document list.

- Enter any Notes in the text box to help describe the list.

- Click Save.

For more information on lists, see

Exporting Prompt Criteria

You can export the contents of the currently displayed Prompt Criteria to an MS Word file using the Export option. This can be helpful for reviewing criteria, saving it to use later, and collaborating with others on it.

- In the Prompt Criteria panel, click the More icon next to the Collapse (<<) icon.

- Select Export.

- Click Save and Export to proceed with exporting the content.

The exported file is saved to your default browser download folder. It contains the information from all available criteria tabs for the currently displayed Prompt Criteria. Audit logs track all export actions from your project.

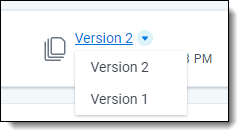

How Prompt Criteria versioning works

Each aiR for Review project comes with automatic versioning controls, so that you can compare results from running different versions of the Prompt Criteria. Each analysis job that uses a unique set of Prompt Criteria counts as a new version.

When you run aiR for Review analysis, the initial Prompt Criteria are saved as Version 1. Edits to the criteria create Version 2, which you can repeatedly modify until you finalize by running the analysis again to see the results. Subsequent edits follow the same pattern, creating new versions that finalize with each analysis run.
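One way to picture this versioning pattern is as a draft that is finalized each time an analysis runs. The Python sketch below models that idea under stated assumptions: the class `PromptCriteriaVersions` and its methods are hypothetical and do not describe how Relativity implements versioning.

```python
class PromptCriteriaVersions:
    """Toy model: edits change a draft, and running an analysis finalizes it."""

    def __init__(self, criteria):
        self.finalized = []    # finalized criteria; index 0 is Version 1
        self.draft = criteria  # criteria not yet used by an analysis job

    def edit(self, criteria):
        # Repeated edits keep modifying the same unfinalized version.
        self.draft = criteria

    def current_version(self):
        # A pending draft counts as the next version number.
        return len(self.finalized) + (1 if self.draft is not None else 0)

    def run_analysis(self):
        # Running finalizes the draft; running again without edits
        # reuses the latest finalized version.
        if self.draft is not None:
            self.finalized.append(self.draft)
            self.draft = None
        return len(self.finalized)


versions = PromptCriteriaVersions("initial criteria")
print(versions.run_analysis())     # 1
versions.edit("first revision")
versions.edit("second revision")   # still Version 2
print(versions.current_version())  # 2
print(versions.run_analysis())     # 2
```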

To see dashboard results from an earlier version, click the arrow next to the version name in the project details strip. From there, select the version you want to see.

How version controls affect the Viewer

When you select a Prompt Criteria version from the dashboard, this also changes the version results you see when you click on individual documents from the dashboard. For example, if you are viewing results from Version 2, clicking on the Control Number for a document brings you to the Viewer with the results and citations from Version 2. If you select Version 1 on the dashboard, clicking the Control Number for that document brings you to the Viewer with results and citations from Version 1.

When you access the Viewer from other parts of Relativity, it defaults to showing the aiR for Review results from the most recent version of the Prompt Criteria. However, you can change which results appear by using the linking controls on the aiR for Review Jobs tab. For more information, see Managing aiR for Review jobs.

Revising the Prompt Criteria

After you run the analysis for the first time on a sample set, use the dashboard to examine the results and refine the Prompt Criteria.

In particular, ask the following questions about each document:

- Did aiR for Review and the human reviewer agree on the relevance of the document?

- Read the aiR for Review rationale and considerations. Do they make sense?

- Do the citations make sense?

For all of these, if you see something incorrect, make notes on where aiR seems to be confused. Here are the most common sources of confusion:

- Insufficient context. For example, an internal acronym, key person, or code word may not have been defined. To fix this, add it to the proper section of the Case Summary tab.

- Ambiguous instructions or unclear language. To fix this, edit the instructions on the Relevance, Key Documents, or Issues tabs.

In general, consider how you would help a human reviewer making the same mistakes. For example, if aiR for Review is having trouble identifying a specific issue, try explaining the criteria for that issue with simpler language.

After you have revised the Prompt Criteria to address any weak points, run the analysis again. Continue refining the Prompt Criteria until the results accurately predict the human coding decisions for all test documents in the sample.

aiR for Review only looks at the extracted text of each document. If a human reviewer marked a document as relevant because of an attachment or other criteria beyond the extracted text, aiR for Review will not be able to match that relevance decision.
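If you track results outside the dashboard, a simple agreement rate can help you judge whether a revision improved the Prompt Criteria. The Python sketch below is one possible measure, not a metric that aiR for Review reports. The function name `agreement_rate` is hypothetical, and the sketch assumes each prediction has already been reduced to relevant or not relevant.

```python
def agreement_rate(pairs):
    """Return the fraction of documents where the prediction matches human coding.

    pairs: iterable of (human_relevant, predicted_relevant) booleans.
    """
    pairs = list(pairs)
    if not pairs:
        raise ValueError("at least one coded document is required")
    agreements = sum(1 for human, predicted in pairs if human == predicted)
    return agreements / len(pairs)


sample = [(True, True), (False, True), (False, False), (True, True)]
print(agreement_rate(sample))  # 0.75
```

Comparing this value between versions on the same sample shows whether a revision moved the predictions closer to the human coding decisions.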

Increasing the job size

When aiR for Review accurately matches human coding decisions on the initial sample documents, increase the sample size. Typically, we recommend starting with an initial sample of about 50 documents, then increasing it to include another 50. However, you may find a different number works better for your project.

To increase the job size:

- Add the fresh documents to the saved search that acts as the project's data source. For more information about saved searches, see Creating or editing a saved search.

- Have a skilled human reviewer review the fresh documents. We recommend doing this before running aiR for Review, so that the reviewer is not biased by aiR's predictions.

- On the aiR for Review Projects tab, select the project.

- At the top of the project dashboard, click the refresh symbol next to the data source's name.

- In the Project Metrics section, click Not Analyzed. This selects the new documents.

- After the document count has updated, click Analyze [X] documents.

The analysis job runs on the new documents, while the previously run documents keep their old results.
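The effect of clicking Not Analyzed after a refresh can be sketched as a set difference between the refreshed data source and the documents that already have predictions. This is a minimal Python illustration; `not_analyzed` and the document identifiers are hypothetical.

```python
def not_analyzed(data_source_ids, analyzed_ids):
    """Return documents in the data source without a prediction, in source order."""
    analyzed = set(analyzed_ids)
    return [doc_id for doc_id in data_source_ids if doc_id not in analyzed]


# Initial sample of 50 documents, all analyzed in the first job.
initial = [f"DOC-{n:03d}" for n in range(1, 51)]
# 50 fresh documents are added to the saved search, then the data source is refreshed.
refreshed = initial + [f"DOC-{n:03d}" for n in range(51, 101)]

pending = not_analyzed(refreshed, initial)
print(len(pending))  # 50
print(pending[0])    # DOC-051
```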

After you have run the job on the larger sample, continue revising the Prompt Criteria until it returns satisfactory results. Continue to increase the job size incrementally until you feel satisfied with the Prompt Criteria. After that, use the refined Prompt Criteria on the larger set of documents. You can do this either from the dashboard, or as a mass operation.