Last date modified: 2026-Jun-05

aiR Assist (Advance Access)

Advance Access (AA) is an opportunity to evaluate and work with Relativity features prior to the General Availability (GA) release. Relativity customers typically participate in AA programs on a feature-by-feature basis. The functionality described in this

aiR Assist is a conversational search tool integrated within RelativityOne, designed to let legal teams interact with their data using natural language. By leveraging advanced AI, aiR Assist helps to surface potential insights, reveal possible connections, and uncover themes more efficiently. This can help teams analyze and interpret legal data more effectively, potentially leading to quicker understanding, better decisions, and defensible workflows when users validate the results.

It works by searching the extracted text of indexed documents. Users can create up to five indexes per workspace, each supporting up to 300,000 documents. When a query is submitted, aiR Assist identifies the documents deemed most relevant and employs a large language model (LLM) to generate answers, complete with citations from as many as 25 source documents.

- aiR Assist is not available in repository workspaces.

- ARM is not supported for aiR Assist. aiR Assist permissions, indexes, conversations, and metadata mapping cannot be archived, restored, or moved using ARM.

How aiR Assist works

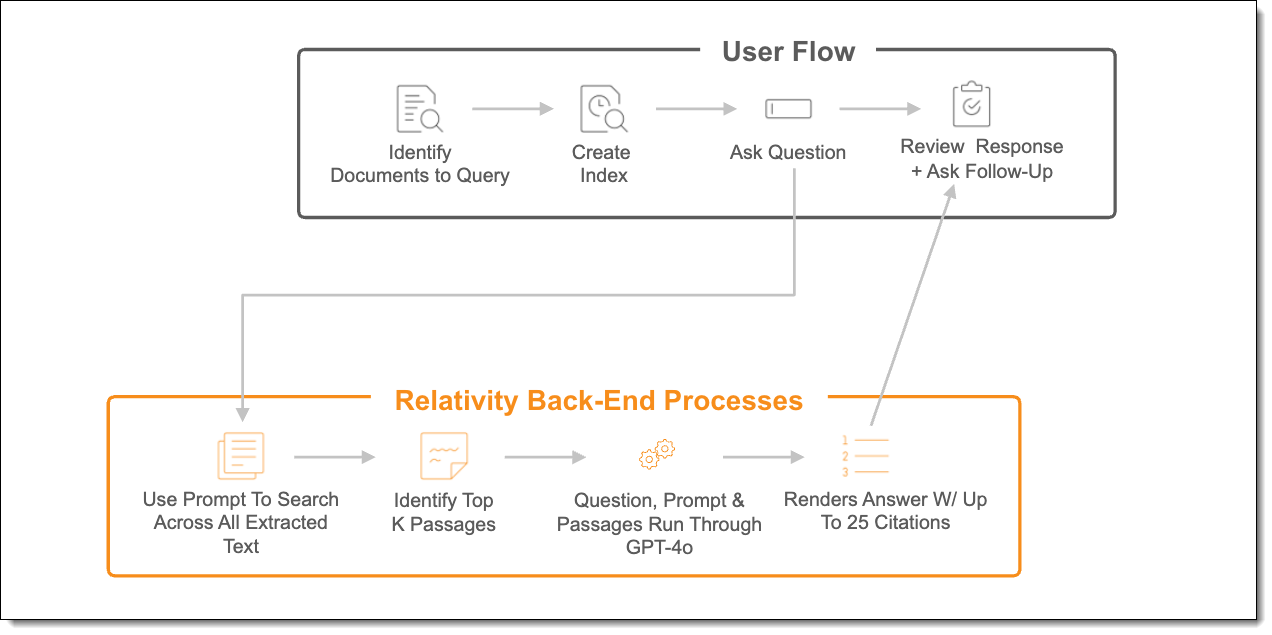

aiR Assist operates using a Retrieval-Augmented Generation (RAG) process to deliver grounded, evidence-based responses. This approach combines document retrieval with large language model generation to help support accuracy, transparency, and contextual relevance.

- Indexing the documents (indexing step): The user identifies documents to query and creates an index.

- Asking a question (question step): The user asks a question.

- Finding relevant documents (retrieval step): Each question is matched against the text indexed from the identified documents. aiR Assist performs a similarity search to identify the most relevant content. The documents are divided into smaller passages, and the system selects results that are estimated to correspond most closely to the question.

- Generating the answer (generation step): The selected passages, along with the original question and system prompt, are passed to the LLM. The model uses this retrieved context to generate a response intended to be coherent, concise, and supported by retrieved content, including up to 25 citations and references to the original sources.
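The four steps above can be illustrated with a minimal Python sketch. This is not Relativity's implementation: the function names, the fixed-size passage splitting, the term-count cosine similarity (a toy stand-in for whatever similarity search aiR Assist uses), and the default system prompt are all assumptions made for illustration. The sketch stops at assembling the prompt and does not call an LLM. Only the cap of 25 cited documents comes from this page.

```python
import math
import re
from collections import Counter

MAX_CITATIONS = 25  # documented cap on cited source documents


def split_into_passages(text, max_words=40):
    """Divide extracted text into fixed-size word windows (assumed strategy)."""
    words = text.split()
    return [" ".join(words[i:i + max_words]) for i in range(0, len(words), max_words)]


def vectorize(text):
    """Toy stand-in for an embedding: lowercase term counts."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a, b):
    """Cosine similarity between two term-count vectors."""
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def build_index(documents):
    """Indexing step: store (doc_id, passage, vector) for every passage."""
    return [
        (doc_id, passage, vectorize(passage))
        for doc_id, text in documents.items()
        for passage in split_into_passages(text)
    ]


def retrieve(index, question, top_k=5):
    """Retrieval step: rank passages by similarity to the question."""
    q = vectorize(question)
    scored = [(cosine(q, vec), doc_id, passage) for doc_id, passage, vec in index]
    scored = [hit for hit in scored if hit[0] > 0]  # drop passages with no overlap
    scored.sort(key=lambda hit: hit[0], reverse=True)
    return scored[:top_k]


def build_prompt(question, hits, system_prompt="Answer only from the passages and cite them."):
    """Generation step input: system prompt, cited passages, then the question."""
    cited, context = [], []
    for _score, doc_id, passage in hits:
        if doc_id not in cited:
            if len(cited) >= MAX_CITATIONS:
                continue  # cap reached: skip passages from additional documents
            cited.append(doc_id)
        context.append(f"[{doc_id}] {passage}")
    prompt = "\n".join([system_prompt, *context, f"Question: {question}"])
    return prompt, cited


if __name__ == "__main__":
    sample = {
        "DOC-001": "The vendor offered gifts and incentives to the procurement manager.",
        "DOC-002": "Quarterly maintenance schedule for the office printers and copiers.",
    }
    prompt, cited = build_prompt(
        "Who offered gifts?", retrieve(build_index(sample), "Who offered gifts?")
    )
    print(prompt)
    print("Cited documents:", cited)
```

Because only the top-ranked passages reach the model, a sketch like this also shows why a retrieval-based answer is not an exhaustive search: passages that rank below the cutoff never appear in the prompt.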

Index and document limits

- Each index can contain up to 300,000 documents.

- A maximum of five (5) built indexes can be created per workspace from a combination of the Case Home document set and public saved searches.

- Individual documents must be 5 MB or smaller; larger files are excluded during indexing.

- Only documents with extracted text are indexed. The text must be stored in Data Grid (not SQL). Files that do not contain extracted text are excluded automatically from the index.
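The limits above can be expressed as a small pre-check, which may help when estimating how much of a saved search would actually be indexed. This is a hypothetical sketch, not a Relativity API: the `Doc` fields, the function names, and the reason strings are invented for illustration. It also makes three assumptions that this page does not state: that "5 MB" means 5 × 1024 × 1024 bytes, that the 300,000-document cap is counted after exclusions, and that exceeding a cap is reported as an error.

```python
from dataclasses import dataclass

MAX_DOCS_PER_INDEX = 300_000
MAX_INDEXES_PER_WORKSPACE = 5
MAX_DOC_BYTES = 5 * 1024 * 1024  # assumption: "5 MB" read as 5 MiB


@dataclass
class Doc:
    """Hypothetical record describing one document in the data source."""
    doc_id: str
    size_bytes: int
    has_extracted_text: bool
    text_in_data_grid: bool


def exclusion_reason(doc):
    """Return why a document would be left out, or None if it qualifies."""
    if not doc.has_extracted_text:
        return "no extracted text"
    if not doc.text_in_data_grid:
        return "extracted text not stored in Data Grid"
    if doc.size_bytes > MAX_DOC_BYTES:
        return "larger than 5 MB"
    return None


def plan_index(docs, existing_index_count):
    """Split a data source into indexable and excluded documents."""
    if existing_index_count >= MAX_INDEXES_PER_WORKSPACE:
        raise ValueError("workspace already has the maximum of five indexes")
    included, excluded = [], {}
    for doc in docs:
        reason = exclusion_reason(doc)
        if reason is None:
            included.append(doc.doc_id)
        else:
            excluded[doc.doc_id] = reason
    # Assumption: the cap applies to documents that survive the exclusions.
    if len(included) > MAX_DOCS_PER_INDEX:
        raise ValueError("data source exceeds 300,000 indexable documents")
    return included, excluded


if __name__ == "__main__":
    source = [
        Doc("A", 2_048, True, True),
        Doc("B", 6 * 1024 * 1024, True, True),
        Doc("C", 512, False, True),
    ]
    included, excluded = plan_index(source, existing_index_count=2)
    print("Indexable:", included)
    print("Excluded:", excluded)
```

Taken together, the documented limits imply a ceiling of 5 × 300,000 = 1,500,000 indexed documents per workspace, with any single question running against one index.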

Understanding aiR Assist responses

aiR Assist is designed to identify and summarize potentially relevant information from large document sets through natural language interaction. The system operates on a Retrieval-Augmented Generation (RAG) architecture, which retrieves and analyzes the documents most likely to be relevant and generates a response based on retrieved content and supported by citations.

aiR Assist is designed to return contextually relevant and evidence-based information rather than performing exhaustive or “find everything” searches. It does not review every document individually, and some occurrences of keywords or topics may not be included in the response.

The RAG process works best when key evidence is found in a few focused documents. Results are less accurate if answers depend on scattered or unclear information.

Deployment and processing geographies

When using Relativity's AI technology, customer data may be processed outside of your designated geographic region. For more information, see Deployment and processing geographies.

Language support

aiR Assist currently supports English-language content only. The system has been designed and tested exclusively on English-language datasets to ensure accuracy, reliability, and consistent performance.

At this time, non-English languages are not supported, and aiR Assist has not been formally evaluated or validated for use with multilingual or non-English text. While it may operate with non-English datasets, results can vary in accuracy and completeness, and verification of cited sources is strongly recommended when working with such content.

Future updates may expand language capabilities based on performance testing and model availability.

Supported use cases

Here are some example questions targeting common use cases for aiR Assist:

| Use case | Common category | Example question |
|---|---|---|
| Early Case Insight | Finding potentially important documents | Can you find me documents that discuss potential gifts or incentives? |
| Early Case Insight | Finding documents by theme | Are there any documents mentioning fraudulent behavior of John Doe? |
| Early Case Insight | Understanding actors and roles | Who was involved in discussions about offering gifts? |
| Case Strategy Development | Identifying a series of events | Create a high-level timeline for events that took place before the start of Project Artemis. |
| Case Strategy Development | Understanding communications and relationships between actors | Who communicated with whom about the contract terms? |
| Deposition/Trial Preparation | Suggesting exhibits based on key criteria | List documents to use as exhibits based on [key document criteria]. |
| Deposition/Trial Preparation | Confirming conversations or actions took place | Did John Maxwell send an email about the compliance policy? |

Release notes

This section includes the release information and the current functionality of the aiR Assist (Advance Access) application.

- Feature Permissions—System Administrators now have the ability to restrict access to aiR Assist per group within a workspace using two Feature Permissions: Index Management and Prompting.

- Metadata Mapping—ability to use metadata fields (Primary Date, Email From/To/BCC/CC) during indexing to improve how aiR Assist finds and prioritizes documents.

- Reasoning summary—ability to see live updates in the interface as aiR Assist works on your question, including the searches being run and a summary of its reasoning.

- Conversation Manager—ability to create, name, and delete conversations, as well as switch between various threads and resume prior discussions.

- Indexing Progress—new feature displaying percentage progress of indexing build or rebuild in the Docs column in Index Manager.

- Indexing Data Source—added Saved Search name to Index Description in Index Manager.

- Indexing Error List—new way of displaying additional information about documents that were not indexed, including the reason a document failed to index.

- New aiR Assist button—aiR Assist is available from the left sidebar instead of under the "Ask AI" button.

- New chat user interface—improved chat experience with the aiR Assist chat panel now opening on the left, while the Documents list is always in view on the right.

- Relocation of start a new chat—the start a new chat conversation option moved to the Answer a question box.

- New Index Manager—ability to filter Indexes and Data Sources (when creating an Index).

- Answer sharing—ability to copy answer to clipboard.

- Give Feedback—ability to rate an answer with Thumbs Up or Thumbs Down icons.

- Index rebuild—existing indexes can now be quickly updated to match changes in the saved search data source.

- Intent clarification—improves aiR Assist conversations by asking follow-up questions when your request is unclear, helping guide you to the right outcome and navigate the limitations.

- Saved Search Indexing—indexing documents from public saved searches is now supported.

- Multi-turn chat—simulates human conversation by preserving context from previous exchanges within the same chat session, which enables follow-up questions and deeper exploration without the need for repetition.

- Chat persistence—chat history is now saved and available for review even after a user logs off their Relativity session.

- Start a new chat—this button starts a new chat conversation below the previous one, separated by a line. The new chat conversation does not reference previous conversations for its responses.

- Search Q&A button—the button is located on the top of the document list page and available for all users in the workspace to use.

- "Ask a question" box—when the panel is open, a user can input a natural language question about the documents. Each question is evaluated and answered individually without consideration of the full conversation.

- Workspace-level context—all indexed documents available in the workspace, up to 100,000, are subject to the querying process.

- Chat history—a user’s chat history is visible during that user’s Relativity session. Once the user logs out of Relativity the chat history will be cleared.

- Citations—references and citations are provided for each response. A user can filter the Document List to show these references or can open each reference from the chat panel in the Document Viewer. Note that in the initial AA release, we do not confirm that the citations are grounded in the document text, as in aiR for Review.